AMD Stock Breakdown

Get smarter on investing, business, and personal finance in 5 minutes.


Looks like with this week’s Five Minute Money, we failed again… it will probably take you 10 minutes to read this AMD deep dive—hopefully time well spent!

It’s not so often that a business that was irrelevant and edging towards bankruptcy makes a comeback…

AMD was a $3 stock when CEO Lisa Su took over.

At best it was a second source supplier for the far superior Intel CPUs.

But in one decade they not only took the lead from Intel...

They took a huge chunk of their revenues.

Intel has lost $25 billion in revenue since 2020.

And AMD grew the same amount…

By outsourcing chip manufacturing to TSMC, they have been able to get the best of both worlds.

They took process technology leadership (by relying on TSMC to manufacture the chips, whereas Intel manufactures their own chips in-house)

And they became a more capital light business in the process by focusing on design.

This is the same thing Nvidia does.

Their market cap increased 100x to $300 billion

And they continue to grow extremely fast.

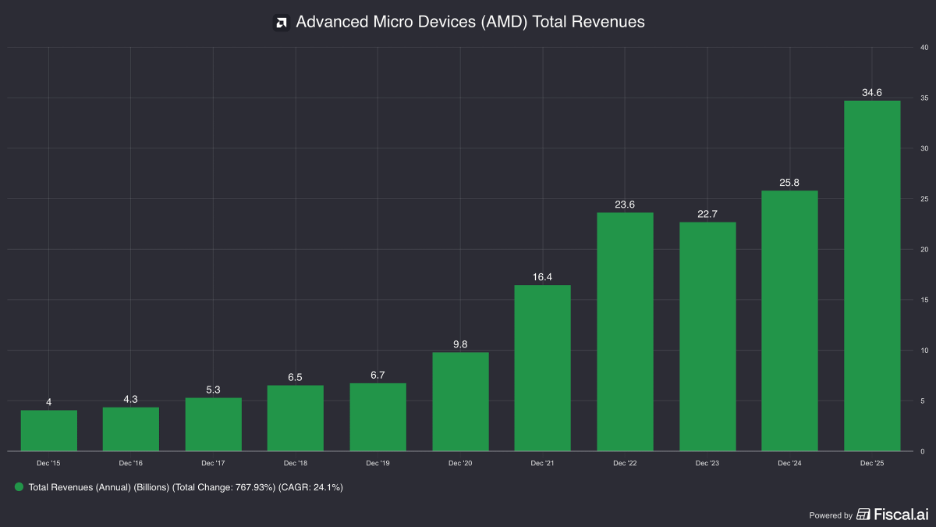

Revenues last year grew +35%

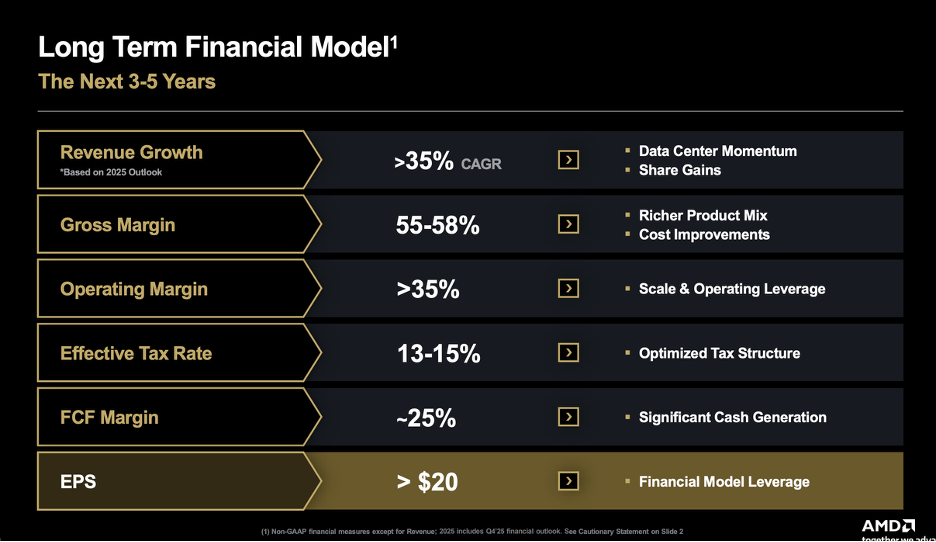

And they are guiding for a continued 35% growth rate for the next 3-5 years!

However, the stock isn’t cheap at 65x trailing free cash flow.

But if they can achieve their goals…

They will trade at 10x future earnings.

So, what is AMD’s advantage versus Intel and how sustainable is it?

And will they also be able to challenge Nvidia for some GPU market share?

We cover all of this and more in this week's Five Minute Money.

Business.

In total, they generate $35 billion in revenues, which are growing 35% y/y.

They have 35% gross margins (versus Nvidia at 70% and Intel also at 35%).

Their operating margin is only 10% because R&D is a whopping 23% of sales.

Operating income is $3.7 billion, up 45% y/y

Free cash flow is $5 billion (after treating stock based comp as an expense)

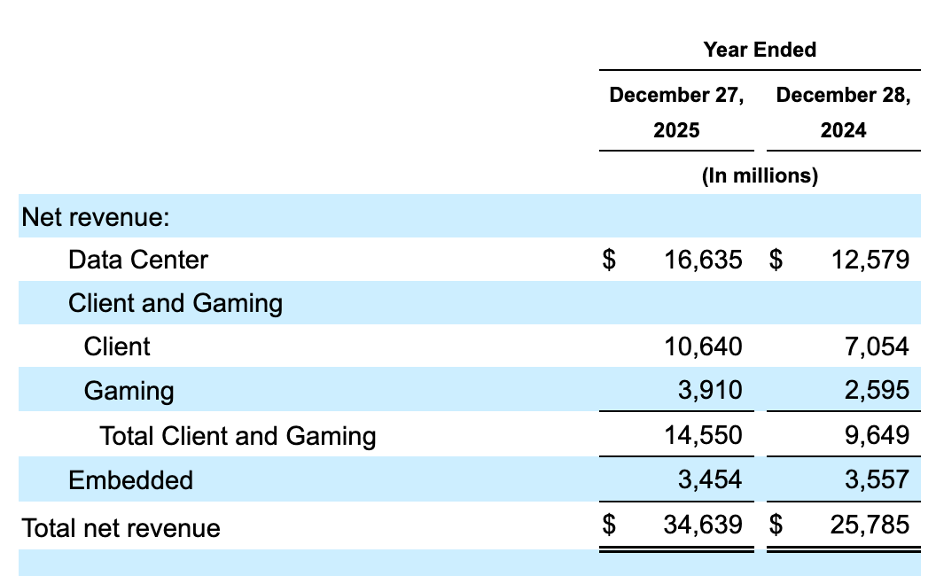

They have 4 product groups: 1) Data Center, 2) Client, 3) Gaming, and 4) Embedded.

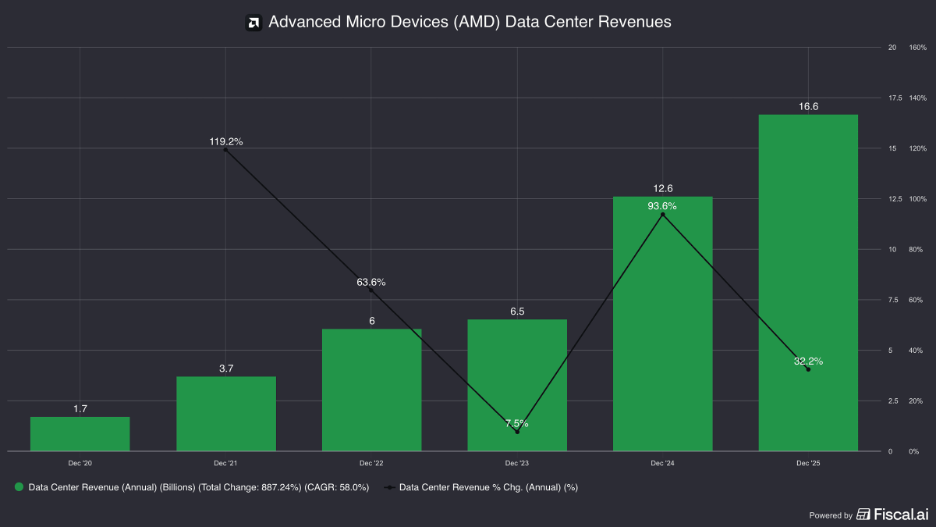

Data Center.

This is their largest segment, representing 48% of revenues and growing over 30% y/y.

And with several large deals with OpenAI and Meta, they think this segment will grow even faster in the future.

There are two major product categories here:

1) EPYC, which are Server CPUs

2) Instinct, which are AI / High Performance Computing (HPC) GPUs

These are the processors that power cloud servers and enterprise data centers.

We will touch back on them momentarily.

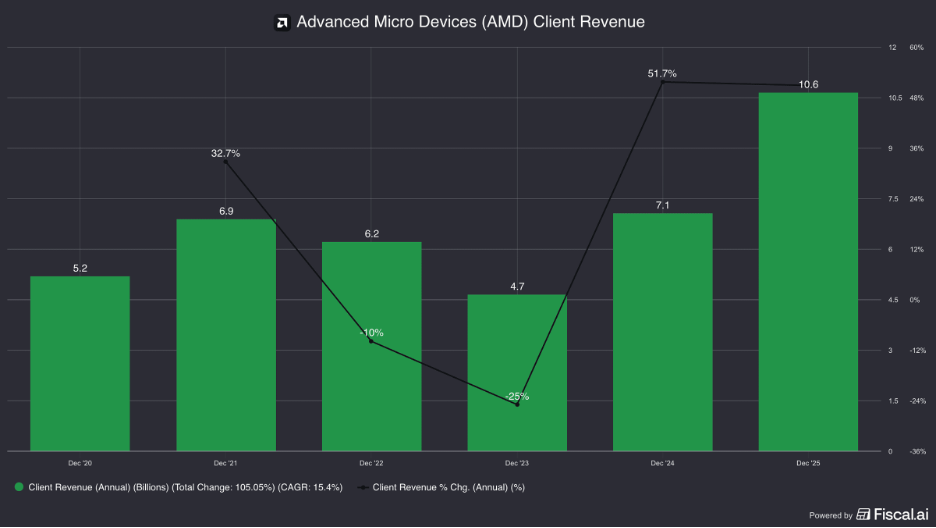

Client and Gaming.

Next is client and gaming, which is 42% of the business.

Client is their PC and notebook business and mostly consists of Ryzen CPUs.

The client business alone is 30% of revenues.

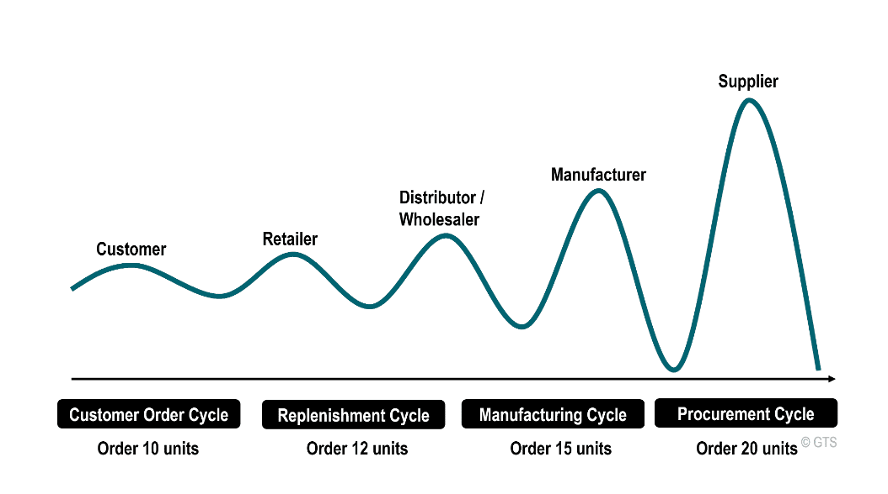

This is a more volatile business because it is tied to consumer and business demand, which can be cyclical.

There is an added issue of channel partners carrying inventory and not wanting to buy more when demand is soft, so they will work down existing inventories.

In 2022 and 2023, for example, revenues fell 10% and then 25%, respectively.

It is notoriously hard to estimate this demand because consumers and businesses can defer computer or notebook purchases and channel partners carry their own inventory that is hard to have full visibility into.

Competition here mainly comes from Intel.

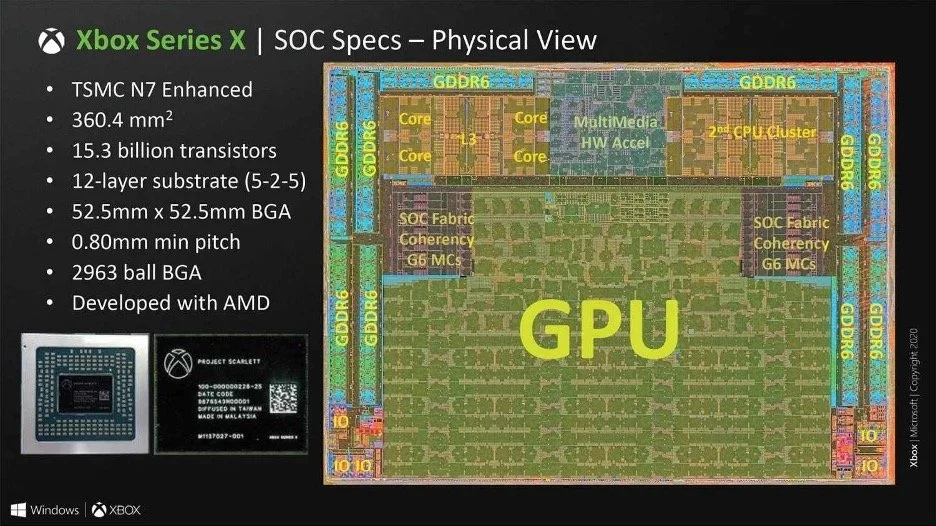

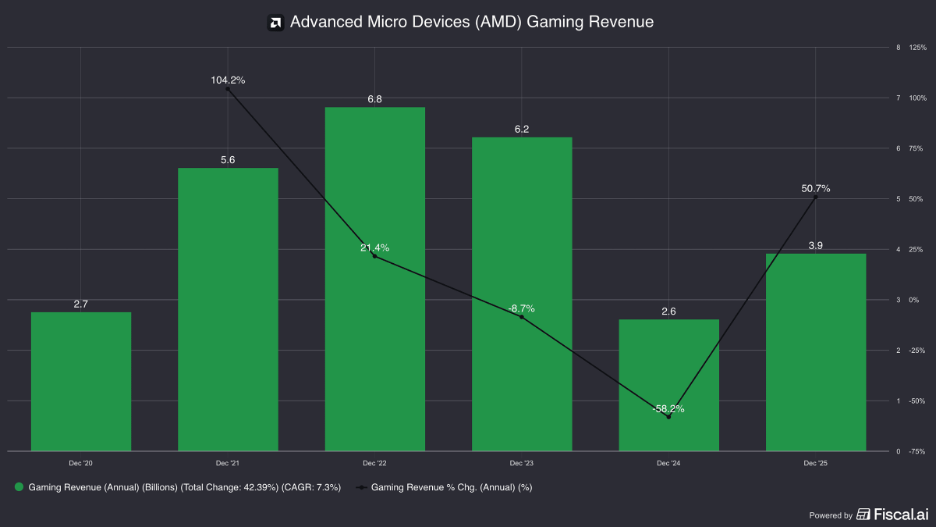

Their gaming business is 11% of total revenues.

This includes their Radeon GPUs and custom SoCs (systems on a chip).

AMD makes semi-custom SoCs for Microsoft’s Xbox and Sony’s PlayStation, which tie together their GPUs with an x86 CPU.

Nvidia has higher-end GPUs (i.e. graphics cards), but AMD has won this market by pairing its GPUs with its CPUs (and by being cheaper).

They also sell stand-alone Radeon GPUs for PCs, but Nvidia has higher end chips here (GeForce).

As you can imagine, this is also a very volatile business as it is tied to the console cycle.

In 2022 gaming revenues were $6.8 billion and hit a low of $2.6 billion in 2024.

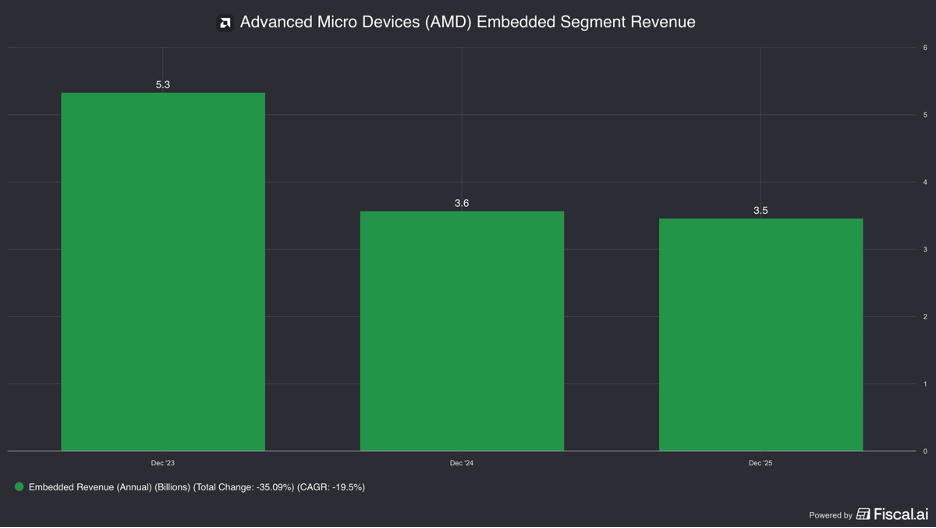

Embedded.

Their last segment is 10% of revenues.

This segment includes a lot of their $50 billion acquisition of Xilinx.

Xilinx invented something called an FPGA (field programmable gate array).

These are chips that can be programmed after they are manufactured.

This segment includes those chips plus “Adaptive SoCs”, embedded Ryzens, and embedded EPYC chips.

Essentially, these are all modified versions of their chips that are inside various larger equipment or machines. Think: robotic arms in factories, vehicle dashboards and infotainment systems, satellites, heavy industrial equipment, ultrasound machines, and in 5G cell towers.

They are designed to work for a long time without issue.

This is still a volatile business as well, because businesses’ capex budgets can be slashed in downturns.

2023 revenues were $5.3 billion versus $3.4 billion in 2025.

This also has to do with something called the bullwhip effect.

When there was the supply chain shortage from Covid, companies started double ordering and building up inventory to make sure they didn’t have a shortage.

Thereafter, they had high inventories that they needed to work down.
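Here is a toy sketch of that bullwhip dynamic. Every number is hypothetical: end demand is held flat at 100 units, customers double order for two periods, and then they burn through the stockpile before ordering again. It is a simplified illustration, not a model of AMD's actual order book.

```python
def simulate_orders(end_demand, panic_periods, order_multiplier=2.0):
    """Toy bullwhip model: returns the orders a chip supplier sees each period.

    All inputs are hypothetical. During the shortage, customers order
    `order_multiplier` times what they actually use; afterwards they order
    only what they cannot cover from the stockpile they built up.
    """
    inventory = 0.0
    orders = []
    for period, demand in enumerate(end_demand):
        if period < panic_periods:
            order = demand * order_multiplier      # double ordering
        else:
            order = max(0.0, demand - inventory)   # work down inventory first
        inventory += order - demand                # stock left after usage
        orders.append(order)
    return orders


# End demand never changes, yet the supplier's orders swing wildly.
orders = simulate_orders(end_demand=[100.0] * 6, panic_periods=2)
print(orders)
```

The customers use the same amount every period, but the supplier sees a boom, then an order drought, then a return to normal.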

Generally speaking, this business should be more diversified than client or gaming because of the very diverse end markets, but we see in reality that isn’t always the case.

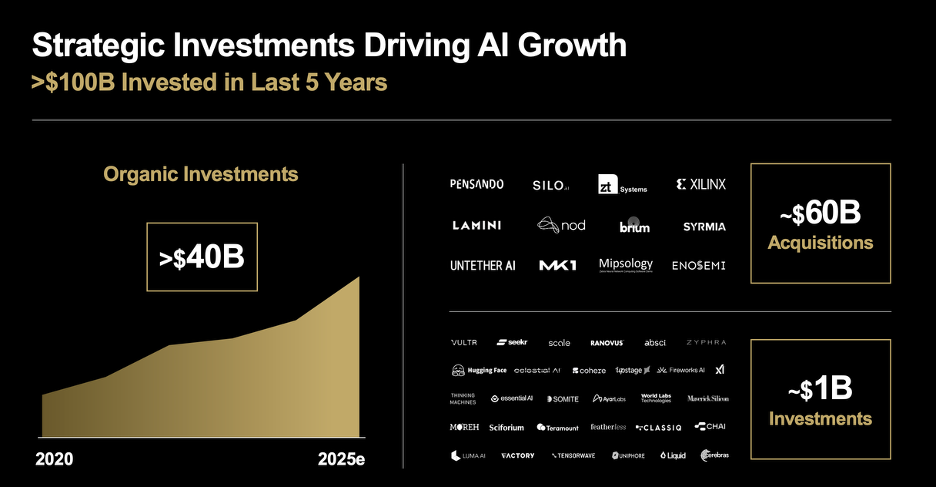

As of late, AMD has really focused on data center though and made three important acquisitions to improve their position there.

We will touch on those and then move into more product level explanations, which will tie into competition.

Acquisitions.

Xilinx.

Their biggest acquisition was already mentioned: Xilinx in 2022 for $50 billion.

This gave them a leading position in FPGAs (field programmable gate arrays).

These are important for cloud providers because they allow them to hard code changes at the hardware level for tasks that would be too slow or compute intensive on a CPU, or too energy inefficient on a GPU.

This, for example, can allow them to adapt to new AI models or video formats instantly without having to spend billions ripping out and replacing obsolete hardware.

It also gave them a bit of product diversification and end market diversity, while also building relationships with new customers

Intel acquired Altera to enter the FPGA market and so there was an aspect where this was defensive too.

The other part of the story is that AMD got the adaptive SoC.

These are chips that combine a CPU with an FPGA and an AI Digital Signal Processor (DSP). This is important for “edge compute”, that is, processing that happens on device.

They integrated their Ryzen CPUs with these so they could offer an AI PC product.

Pensando.

AMD acquired Pensando for $1.9 billion in 2022.

This gave them more networking capability for data centers, which means moving data between servers.

Pensando makes a DPU (data processing unit), which can take over a lot of the networking, security, and storage-related work that would otherwise burden the server’s main CPU.

ZT Systems.

This was a 2024 acquisition for $4.9 billion.

This was a company that built the actual physical server infrastructure for the world's largest hyperscale cloud providers, like Microsoft and Amazon.

They were the experts at taking raw computer chips and wiring them into massive, liquid-cooled data center racks.

This gave AMD 1,000 systems design engineers and the ability to sell full AI server racks, just like Nvidia does.

Now let us move into competition through the lens of their data center products starting with the EPYC, their data center CPU.

This should also help clarify any confusion.

Competition (and where AMD wins).

EPYC.

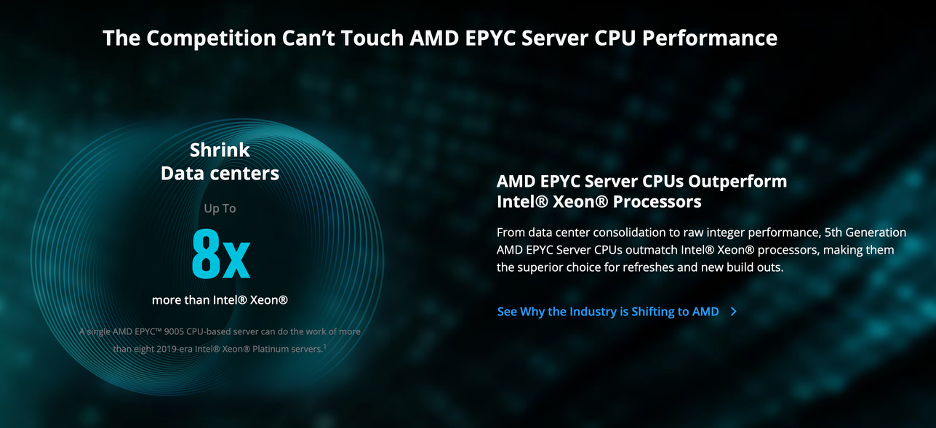

The key to their success has been creating a chip that allows data centers to do more “work” per server.

Work here can mean more VMs (virtual machines) and containers per server.

VMs are full software-based computers running on one physical server, while containers isolate and run individual applications without each needing a full separate operating system.

This is key to understanding why AMD’s EPYC took share.

They typically offer more cores, the individual processing units inside a CPU that let it handle multiple tasks at the same time, which helps run more workloads at once.

It also has strong performance-per-watt (how much computing you get for each unit of power).

EPYC also supports lots of memory bandwidth (how quickly data moves between memory and the chip) and PCIe (Peripheral Component Interconnect Express — the high-speed lanes connecting chips to GPUs, SSDs, and networking gear).

That means with EPYC, hyperscalers and data centers are getting more VMs and containers per server, plus more room for accelerators and storage.

The result is lower TCO (total cost of ownership) through fewer servers, less power, and less rack space.
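To make the "fewer servers" logic concrete, here is a minimal sketch. The fleet size and VMs-per-server figures are invented purely for illustration; they are not AMD or Intel specifications.

```python
import math


def servers_needed(total_vms, vms_per_server):
    """Whole servers required to host a fixed number of VMs."""
    return math.ceil(total_vms / vms_per_server)


# Hypothetical fleet of 10,000 VMs. The denser server hosts 1.5x as many VMs.
baseline = servers_needed(10_000, vms_per_server=40)
denser = servers_needed(10_000, vms_per_server=60)
savings = 1 - denser / baseline

print(f"{baseline} servers vs {denser} servers ({savings:.0%} fewer)")
```

Under these made-up inputs, packing 50% more VMs into each box cuts the server count by about a third, and power and rack space shrink with it.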

AMD made a design decision to manufacture “chiplets”, which basically means building one big chip out of several smaller chips instead of one giant piece of silicon.

This made it easier for them to build chips with more cores (read: faster).

And that is a key reason why they have been able to take share from Intel, who used to have 95% share in data center.

So while the EPYC CPU Server chips have been gaining share in datacenters…

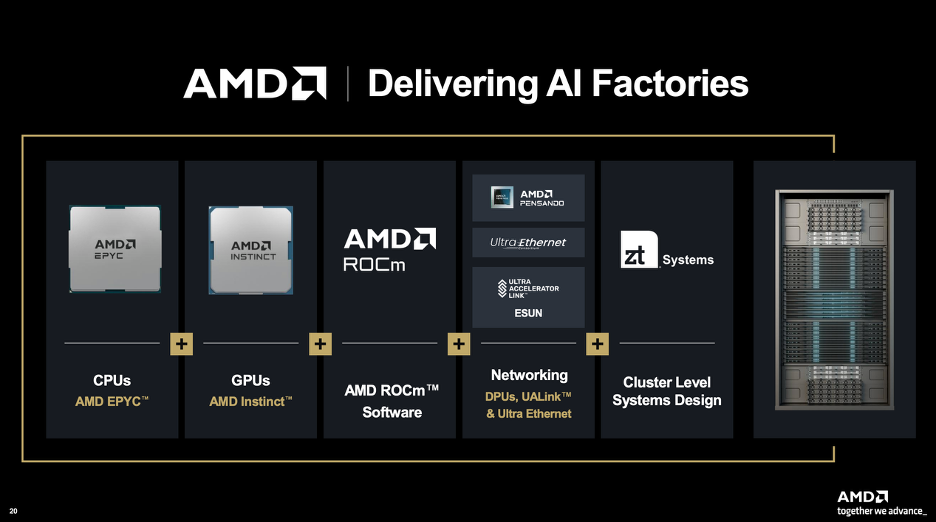

AMD’s Instinct GPU is an up-and-coming competitor to Nvidia.

Now, Nvidia has a strong lead and moat against other GPU providers.

But if we just isolate comparing chip to chip…

AMD Instinct has largely caught up to Nvidia’s last generation Hopper chip and is competitive with Blackwell at the chip-spec level, especially on memory capacity and bandwidth.

But it is about much more than the raw chip specs. It is also about the full platform: how well the GPUs scale together, the interconnect and networking, the software stack, and how easy it is to deploy and operate at production scale.

This is where Nvidia really wins.

Multi-GPU scaling: NVIDIA’s edge is not just the chip, but how well many GPUs work together as one larger system. Its NVLink (high-speed GPU-to-GPU interconnect) and NVSwitch (switching fabric linking many GPUs) help large AI clusters move data quickly, which is critical for training and inference.

NVLink helps all the GPUs talk quickly together and NVSwitch coordinates it all

Full platform approach: NVIDIA is not just selling GPUs though, but a full stack including HGX (AI server platform), DGX (integrated AI system), networking, interconnect, and software. That makes it easier for customers to buy a more turnkey solution.

Instead of the customer putting everything together themselves, Nvidia will sell you an entire server that is already set up

The DGX B200, for instance, has 8 B200 (Blackwell) GPUs, 2 Intel Xeon CPUs, storage, 2 NVSwitch chips, and NVLink in the box, with another software layer on top.

Note that Nvidia is still relying on Intel for CPUs, which is where their partnership comes into play.

HGX is similar, except it is closer to just the server board itself, with 8 B200 GPUs linked by NVLink.

CUDA and software maturity: NVIDIA’s CUDA (its GPU software platform) has the deepest ecosystem, libraries, and tooling in AI. That reduces the engineering work needed to get high performance from large clusters.

Developers usually write AI code in frameworks like PyTorch, JAX, or TensorFlow, then point that code at NVIDIA GPUs with settings like PyTorch’s device="cuda", which tells the framework to run the work through NVIDIA’s CUDA software stack.

Since so much of the AI ecosystem has been built and optimized around CUDA, customers often need less engineering work to get high performance out of NVIDIA systems.

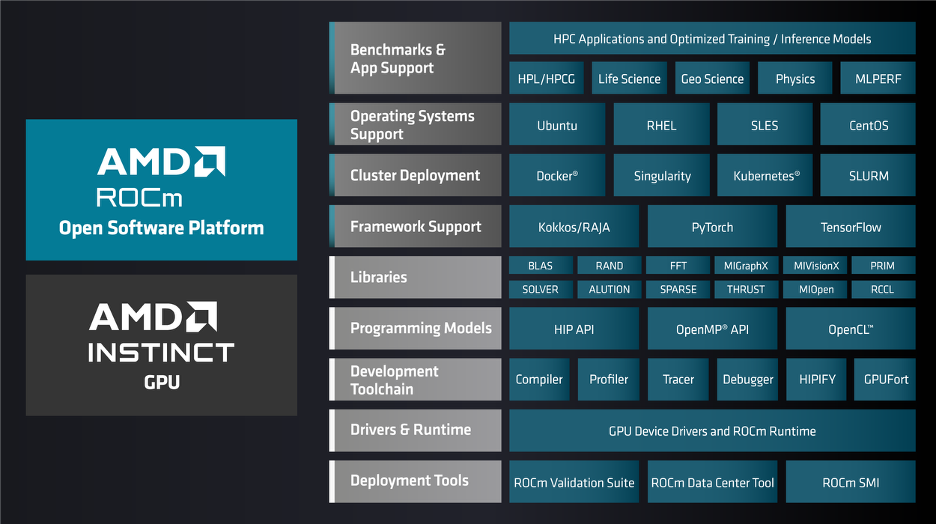

Deployment maturity: NVIDIA’s offering is more plug-and-play at production scale. AMD’s ROCm (its open GPU software stack) is improving, but customers may still need more tuning, validation, and optimization to get the most out of Instinct systems.

Nvidia has software called Base Command Manager and Mission Control, which helps customers manage, monitor, and orchestrate large GPU clusters in production rather than configuring and operating every system manually.

AMD has their own platform, but it is less of a “turnkey” solution and more of an open, partner-driven platform built around Instinct GPUs, EPYC CPUs, Pensando networking, and ROCm software. AMD’s upcoming Helios rack-scale platform is the clearest example of that approach.

The Helios rack is a physical manifestation of why they bought ZT Systems. It allows them to put together:

· 72 AMD Instinct GPUs

· Next Gen EPYC CPUs

· Pensando’s networking card

· Built-in liquid cooling infrastructure

Now AMD can also sell a fully finished “rack”.

AMD actually designed this with input from Meta and then donated the blueprint to the “Open Compute Project”.

Instead of being a fully proprietary system, it fits into standard data centers without customization, using standardized networking instead of a proprietary cable like NVLink.

Since it's open source, vendors can mix and match parts if they wish (namely swapping out the infrastructure around it—networking and cooling).

The other aspect of AMD being more open is ROCm.

NVIDIA’s CUDA is a closed, proprietary software ecosystem: it is highly polished and widely used, but developers cannot inspect or modify the underlying code and are effectively locked into NVIDIA hardware.

AMD’s ROCm, by contrast, is open-source and publicly available on GitHub, allowing developers to inspect, modify, and customize the stack without licensing fees.

All of this is why AMD tends to be a partner of choice for the largest customers, who are spending enough money that it could be worth them customizing AMD’s products to make them better suited for their use.

Meta partnered with AMD to deploy 6 gigawatts of power (about 3 million GPUs) through 2030 in a deal worth an estimated $60 billion. Meta will co-engineer a custom GPU chip with AMD that they intend to use for inference.
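A quick back-of-the-envelope on those deal figures, as a sketch. It uses only the three numbers quoted above. The per-GPU power figure presumably covers the whole system around each GPU (CPUs, networking, cooling) rather than the chip alone, and that reading is an assumption.

```python
power_watts = 6e9        # 6 gigawatts
gpu_count = 3e6          # about 3 million GPUs
deal_value_usd = 60e9    # estimated $60 billion

watts_per_gpu = power_watts / gpu_count          # all-in power per GPU
usd_per_gpu = deal_value_usd / gpu_count         # implied spend per GPU
usd_per_gigawatt = deal_value_usd / (power_watts / 1e9)

print(f"{watts_per_gpu:,.0f} W per GPU")
print(f"${usd_per_gpu:,.0f} per GPU")
print(f"${usd_per_gigawatt / 1e9:,.0f}B per gigawatt")
```

That works out to roughly 2 kW and $20,000 per GPU, or about $10 billion per gigawatt deployed.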

As part of the deal though, AMD will issue warrants to Meta at $0.01 that could give them ownership of up to 10% of the business. They only receive the warrants if they make the purchases though and AMD stock hits various milestones (the highest is at $600).

They also have a very similar partnership with OpenAI for 6 gigawatts of power and warrants that could represent 10% of their business.

OpenAI wants to make sure their software, Triton (an open source competitor to CUDA that runs on top of ROCm), runs flawlessly on AMD hardware.

The goal for AMD here is to establish themselves in these large customers’ data centers, knowing that if these partnerships work, those customers are likely to buy much more from AMD in the future.

This is because no one wants to be beholden to a single-source supplier. So despite Nvidia’s superior products, these customers are trying to support AMD to create something that works for them, is cost effective, and can wean them off of Nvidia dependence.

Nvidia has 60% operating margins (operating, not gross), so they are clearly benefiting from the market’s total dependence on them.

This is what AMD (and Nvidia customers) are trying to crack.

And AMD hopes that as workloads move to more inference rather than training, they are better positioned.

Inference takes a lot of memory and AMD has been cramming it into their GPUs.

With top tier performance less important for most inference, AMD is often more cost effective.

Which could play to AMD’s advantage.

Why Nvidia?

Having said all of this, Nvidia’s real world performance is consistently higher.

They have been at this for over 2 decades and had much more time to optimize their CUDA library to their GPUs.

AMD still trails in this “deployment maturity”, and their offerings still require more customer-side tuning, which is fine for a Meta or OpenAI, but not for many others.

Nvidia also might be able to get by with less memory because their software is more efficient. This is one of the benefits of an integrated ecosystem.

Nvidia still has design leadership and has recently accelerated from a 2 year chip cycle to 1 year.

Lastly, Nvidia still has a bigger lead with their ecosystem, and CUDA is the most prominently used GPU software platform.

It is hard to switch away from CUDA because frameworks like PyTorch and JAX reference specific CUDA libraries.

The CUDA references correspond to actual movements at the chip level, telling a GPU how to run operations and move memory around in the real world.

AMD’s ROCm, for instance, tends to group threads (read: work) into groupings of 64, whereas Nvidia’s CUDA does it in 32. If you have written custom code for CUDA, then it is optimized for an Nvidia chip and you cannot run it as effectively on an AMD chip without changing the code.

For example, when an AI engineer writes a custom CUDA kernel, they are explicitly telling the chip: "Take this exact block of data from the slow global memory, move it into the ultra-fast L1 cache next to the processor, do the math, and put it back."

Now AMD is trying to fight this CUDA lock-in with HIPIFY, their translator program that turns Nvidia CUDA code into AMD ROCm vocabulary.

The problem is it can only translate syntax, not the actual physical logic. It can’t rewrite something optimized for groups of 32 threads into something optimized for groups of 64. So a human is still required to rewrite a lot of this. (AMD explicitly says that HIPIFY is not a drop-in replacement).
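The 32-versus-64 problem can be sketched with a toy model. This is plain Python standing in for GPU code, not a real kernel. It mimics a common reduction pattern where each thread ("lane") in a group adds the value held by a lane a fixed distance above it, halving that distance each step. The function name and the rule that an out-of-range read returns the lane's own value are simplifying assumptions of this sketch.

```python
def shuffle_down_reduce(lanes, first_offset):
    """Toy model of a shuffle-down tree reduction across one thread group.

    `lanes` holds one value per thread in the hardware group, and
    `first_offset` is the step size the kernel author hardcoded.
    Returns the value lane 0 ends up holding.
    """
    vals = list(lanes)
    width = len(vals)
    offset = first_offset
    while offset > 0:
        # Every lane adds the value held `offset` lanes above it.
        # Out-of-range reads return the lane's own value (sketch assumption).
        vals = [
            vals[i] + (vals[i + offset] if i + offset < width else vals[i])
            for i in range(width)
        ]
        offset //= 2
    return vals[0]


# Code written for 32-wide groups starts at offset 16 and works there.
on_32_wide = shuffle_down_reduce([1] * 32, first_offset=16)

# The same hardcoded offset on a 64-wide group silently skips half the lanes.
ported_as_is = shuffle_down_reduce([1] * 64, first_offset=16)

# Someone has to change the logic (start at 32) to cover the whole group.
ported_and_fixed = shuffle_down_reduce([1] * 64, first_offset=32)

print(on_32_wide, ported_as_is, ported_and_fixed)
```

A syntax translator would carry the hardcoded 16 across unchanged. Spotting that it needs to become 32, and then retuning everything built on top of it, is the part that still takes an engineer.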

Now a key risk to CUDA and potential benefit for AMD is if AI can do this rewriting of actual logic.

This is currently outside the realm of what Claude Code can do, because while it can translate the code and get a sense of the logic, the result doesn’t reach the same level of chip efficiency as CUDA. It is especially hard because writing the logic a certain way doesn’t always translate to real world performance, which is part of what Nvidia learned when tuning CUDA over the past decades.

Intel is Still Swinging.

Intel has had a lot of government backing as well as support from customers like Apple to regain their leading manufacturing position from TSMC.

Now whether that happens or not is not for us to discuss here.

But what is important to note is that they are receiving a lot of support to increase their competitiveness.

Partly this is for fears that TSMC gets taken out in a war, but also because customers don’t want to be beholden to just TSMC.

Nvidia also struck a partnership with Intel to link their GPUs to Intel’s CPUs for the PC market. They are also partnering on custom Intel Xeon chips in Nvidia’s racks for customers who want to stay on Intel.

It’s not all good news for Intel though... Nvidia also rolled out their own ARM-based CPU called Vera.

No matter, Intel’s data center market share has stabilized for the first time in 5 years.

And it seems that Intel is more focused than they have been in the past, making further market share gains harder.

The Cycle.

The biggest risk, though, is that chips are still a cyclical business.

While we may be going through a super cycle and it is unclear when it will end—it could take many years, maybe even a decade…

But capex on data centers will eventually slow...

And when it does, AMD’s earnings are at risk of crashing down.

It is very hard to know what data center demand is going to be in 3 years from now, let alone 10.

That makes this an inherently hard business to forecast.

For now, though, AMD sees strong visibility of a 60% CAGR in their data center business for the next 3-5 years, backed by large contracts.

The concern is of course then what?

At a low valuation, less growth is needed to make an investment work, so what’s priced in?

Valuation.

At a $200 stock price they have a market cap of $326 billion.

LTM they have FCF of $5 billion (after SBC).

This is a 65x free cash flow multiple.
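The trailing multiple is simple division, sketched here with the figures quoted above. The free cash flow yield and implied share count are just derived from the same three inputs, not separately reported numbers.

```python
share_price = 200.0   # $ per share
market_cap = 326e9    # $
ltm_fcf = 5e9         # $ of free cash flow, after stock based comp

fcf_multiple = market_cap / ltm_fcf
fcf_yield = ltm_fcf / market_cap
implied_shares = market_cap / share_price

print(f"{fcf_multiple:.1f}x FCF, {fcf_yield:.1%} FCF yield")
print(f"{implied_shares / 1e9:.2f}B shares implied")
```

Put differently, at this price a buyer is getting about a 1.5% free cash flow yield today and paying for growth to do the rest.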

But they are growing very fast and operating margins have been expanding.

If we grow revenues out 3 and 5 years at 35%, we get $85 billion and $155 billion in revenue.

They also guided to 35% operating margins.

With those margins, earnings could be $24 billion in 3 years for a 13.5x multiple of 2028 earnings…

Or $43 billion for a 7.5x multiple of 2030 earnings.
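Here is a rough sketch of that projection. Two caveats: the 20% tax rate is an assumption (it is not stated here, but it roughly reconciles a 35% operating margin with the earnings figures above), and starting from a rounded $35 billion base lands slightly above the $85 billion and $155 billion revenue figures.

```python
MARKET_CAP = 326.0      # $ billions
BASE_REVENUE = 35.0     # $ billions, rounded trailing revenue
GROWTH = 0.35           # guided annual revenue growth
OP_MARGIN = 0.35        # guided operating margin
TAX_RATE = 0.20         # ASSUMPTION: not stated in the article


def project(years):
    """Return (revenue, earnings, multiple of today's market cap)."""
    revenue = BASE_REVENUE * (1 + GROWTH) ** years
    earnings = revenue * OP_MARGIN * (1 - TAX_RATE)
    return revenue, earnings, MARKET_CAP / earnings


for years in (3, 5):
    revenue, earnings, multiple = project(years)
    print(f"{years}y: revenue ${revenue:.0f}B, "
          f"earnings ${earnings:.0f}B, {multiple:.1f}x")
```

Small changes to the growth rate or margin move these multiples a lot, which is the point of the caution that follows.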

Now it is certainly possible they grow faster than the 35% and maybe margins are better.

But it is also possible that even if they grow a bit more, it is still peak cycle earnings.

While we don’t know exactly whether future data center demand will be as cyclical as their history suggests, a conservative investor would want to be very careful here and avoid putting a high multiple on potentially peak cycle earnings.

Frankly, it is very hard to know what the “right” multiple is because it depends how much earnings fluctuate and we are entering unknown territory here for AMD.

Historically, their multiple has ranged anywhere from 300x to substantially negative. This is because of how much earnings can swing.

This level of data center demand, with individual companies striking $60 billion deals, adds an extreme level of complexity to estimating revenues.

If for any reason OpenAI falters on their commitments, that could potentially wipe out a huge chunk of their revenues. And if OpenAI is not fulfilling their commitments then other players likely aren’t stepping up spend either.

If you assumed trough earnings were half of peak earnings, then a low double digit multiple might make sense.

At 10-15x future 2030 earnings that is 33-100% upside or a 6-15% annual return.
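The upside and annual return figures can be reproduced with a short sketch. It assumes a 5-year holding period to 2030, the $43 billion earnings estimate and $326 billion market cap from above, and no dividends or change in share count.

```python
def upside_and_annual_return(exit_multiple, future_earnings, market_cap, years):
    """Total upside and compound annual return implied by an exit multiple."""
    future_value = exit_multiple * future_earnings
    growth = future_value / market_cap
    return growth - 1, growth ** (1 / years) - 1


for multiple in (10, 15):
    upside, annual = upside_and_annual_return(
        exit_multiple=multiple,
        future_earnings=43.0,   # $ billions, 2030 estimate
        market_cap=326.0,       # $ billions today
        years=5,                # ASSUMPTION: 5-year holding period
    )
    print(f"{multiple}x: {upside:.0%} upside, {annual:.1%} per year")
```

Note how a doubling over five years is only a mid-teens annual return, and that is the optimistic end of the range.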

This multiple could be very conservative if they continued to grow at high rates.

On the other hand, if that was peak earnings and in 2030 it was clear that spending on data centers was going to slow or contract a bit, you would see earnings compress.

That operating leverage they enjoyed on the way up would hurt them on the way down.

It is not uncommon for a semiconductor company to lose almost all of their earnings in a down cycle.

AMD’s operating income dropped 90% from 2021 to 2023.

If you thought you were owning a stock at a 10x multiple and then earnings fell 90%, that is now a 100x multiple.
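The mechanics are worth seeing once, as a sketch that holds the stock price fixed (in practice the price would likely move too):

```python
def multiple_after_decline(starting_multiple, earnings_decline):
    """Earnings multiple after earnings fall, with the price unchanged."""
    return starting_multiple / (1 - earnings_decline)


for decline in (0.50, 0.90):
    new_multiple = multiple_after_decline(10, decline)
    print(f"10x with earnings down {decline:.0%} -> {new_multiple:.0f}x")
```

The relationship is not linear: a 50% drop doubles the multiple, but a 90% drop multiplies it by ten.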

More than anything I want to emphasize how tricky and imprecise any multiple you put on a cyclical company is.

A multiple is shorthand for a discounted cash flow, and trying to estimate out their cash flows (even getting confidence on the low end) in far out years is as complicated as modeling the entire economy.

Nevertheless, some investors may see AMD’s $1 trillion TAM, like their right to win, and want to bet on them, hoping they can continue to outperform.

That is up to you to decide as an investor.


Nothing in this newsletter is investment advice nor should be construed as such. Contributors to the newsletter may own securities discussed. Furthermore, accounts contributors advise on may also have positions in companies discussed. Please see our full disclaimers here.