6 Ways AI Kills Software Stocks

Get smarter on investing, business, and personal finance in 5 minutes.

Is AI going to be the end of software?

That certainly seems to be what a lot of investors are worried about.

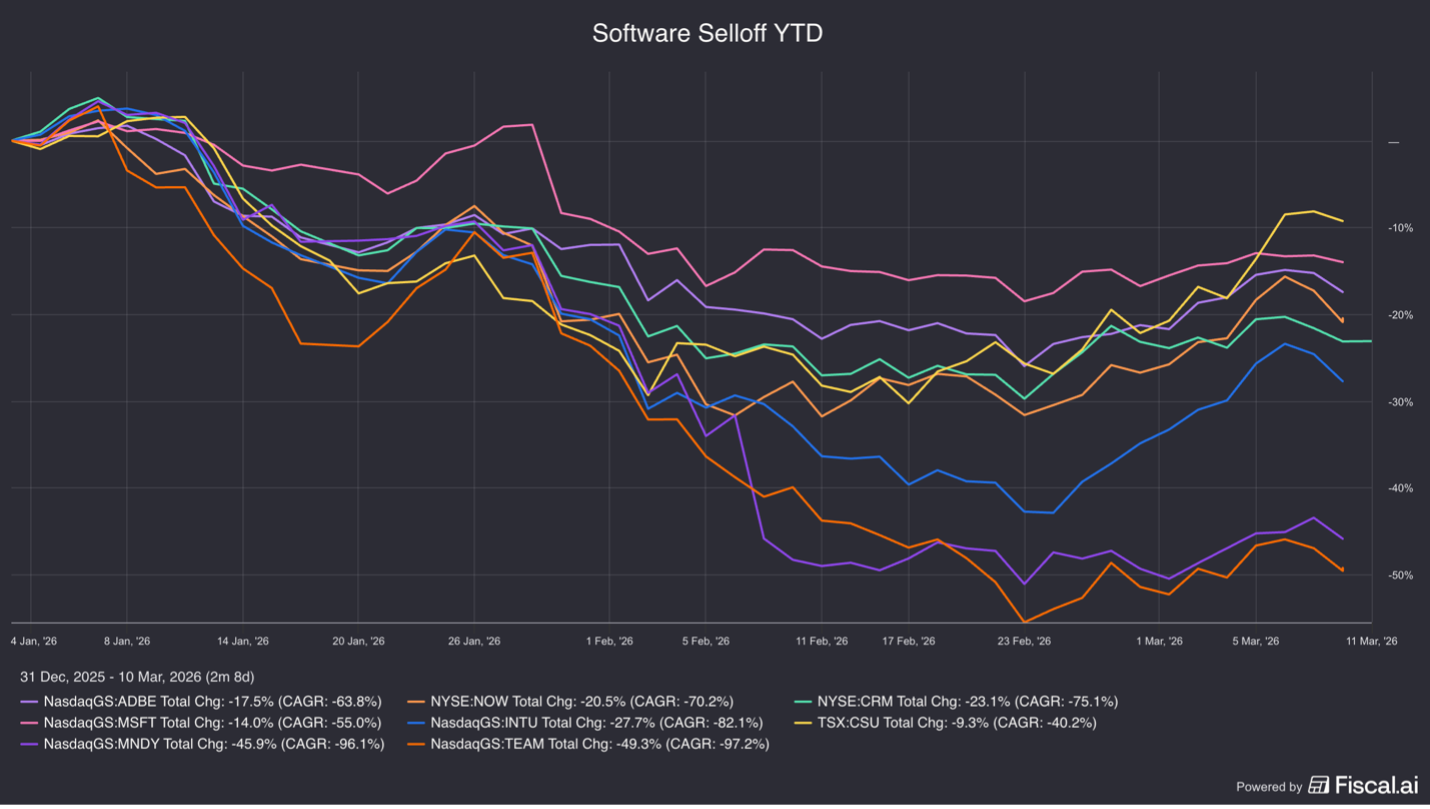

And in response, they have been selling off software stocks at a rapid pace.

Many publicly traded software companies have experienced sharp drawdowns, with several stocks falling more than 50% from their peaks. It is one of the sector’s steepest corrections since the Covid market sell-off in 2020.

Many investors are worried that software stocks are going to be like investing in the Yellow Pages during the advent of the internet.

The internet killed a whole host of industries, including video rental stores, print encyclopedias, music stores, and newspapers.

It seems very likely that AI is going to disrupt something, and with AI able to recreate entire software apps in minutes, aren’t software stocks the obvious candidate?

The central question investors are grappling with is: how will AI impact the software sector?

After researching several major software companies including Adobe, ServiceNow, Salesforce, Microsoft, Intuit, and Constellation Software, I came up with six ways AI could disrupt (or kill) software businesses.

We will break down each of these risks in this week’s Five Minute Money!

Risk 1: A Potential Shift in Software Pricing Models

Many enterprise software platforms charge customers on a per-seat basis, meaning companies pay according to the number of employees using the product.

If AI tools significantly increase worker productivity, organizations may require fewer employees—and therefore fewer software licenses.

For example, if a design team previously required 50 employees using Adobe’s creative software, AI-enabled workflows might allow the same output with far fewer workers.

In theory, that could reduce the number of software seats purchased.

However, the economic incentives suggest a more balanced outcome.

If AI allows a company to reduce headcount while maintaining productivity, the savings from labor reductions typically dwarf the cost of software.

In such cases, software vendors may be able to raise prices or introduce usage-based fees while still delivering net savings to customers.
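To make that arithmetic concrete, here is a rough back-of-the-envelope sketch in Python. Every number in it is a hypothetical assumption chosen for illustration (a $1,000 annual license per seat, a $100,000 fully loaded salary, 30 workers matching the output of 50, and a vendor that triples its seat price); none of it reflects Adobe’s actual pricing or any real team.

```python
# Back-of-the-envelope model of per-seat pricing after AI productivity gains.
# All figures are hypothetical assumptions, not real Adobe pricing or salary data.

SEAT_PRICE = 1_000   # assumed annual license cost per seat (USD)
SALARY = 100_000     # assumed annual fully loaded cost per employee (USD)


def annual_cost(seats: int, seat_price: float, salary: float) -> float:
    """Yearly spend on staff plus one software license per staff member."""
    return seats * (salary + seat_price)


# Before AI: 50 designers, each with a license at the original price.
before = annual_cost(50, SEAT_PRICE, SALARY)

# After AI (assumed): 30 designers match the old output, and the vendor
# triples its per-seat price to capture part of the value it creates.
after = annual_cost(30, 3 * SEAT_PRICE, SALARY)

vendor_before = 50 * SEAT_PRICE
vendor_after = 30 * 3 * SEAT_PRICE

print(f"Customer spend: ${before:,.0f} -> ${after:,.0f} (saves ${before - after:,.0f})")
print(f"Vendor revenue: ${vendor_before:,.0f} -> ${vendor_after:,.0f}")
```

Under these assumptions the customer still saves about $1.96 million a year while the vendor’s revenue from this team rises from $50,000 to $90,000. That is the sense in which labor savings dwarf software costs: fewer seats does not have to mean less revenue if the price per seat can rise.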

I think businesses are generally good at figuring out how to price their products as long as they are delivering more value to their customers.

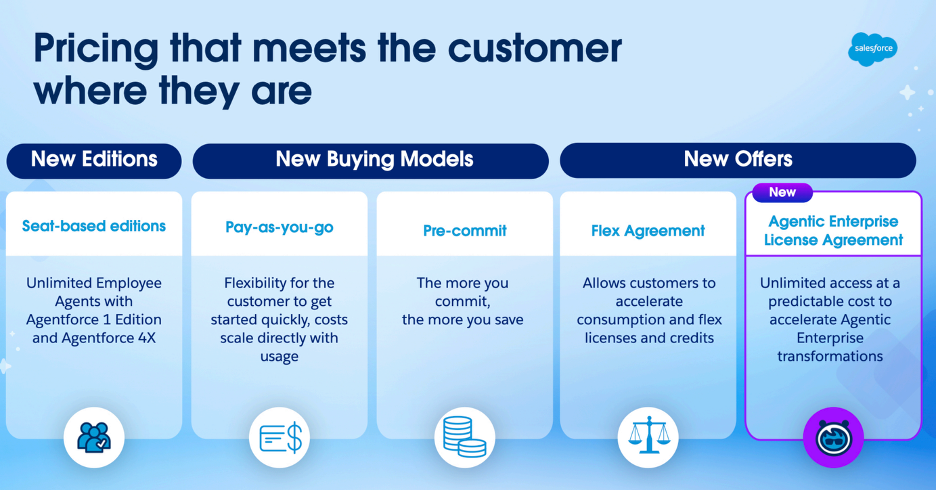

For example, Marc Benioff of Salesforce has said that companies want a lot of visibility into how much they will pay for software ahead of time, because they set budgets once a year.

So instead of focusing on saving money, they are often focused on cost visibility.

That is why Salesforce has talked about moving to an enterprise-wide model that charges a business one fee for unlimited use of its apps, which the customer can then use however it wants.

In general, it seems unlikely that a business providing MORE value will have trouble charging for it.

Now, that doesn’t mean there won’t be some short-term financial volatility as these business models transition, just that, by and large, I don’t see this as a major risk.

This also isn’t the first time the industry has undergone such a transition.

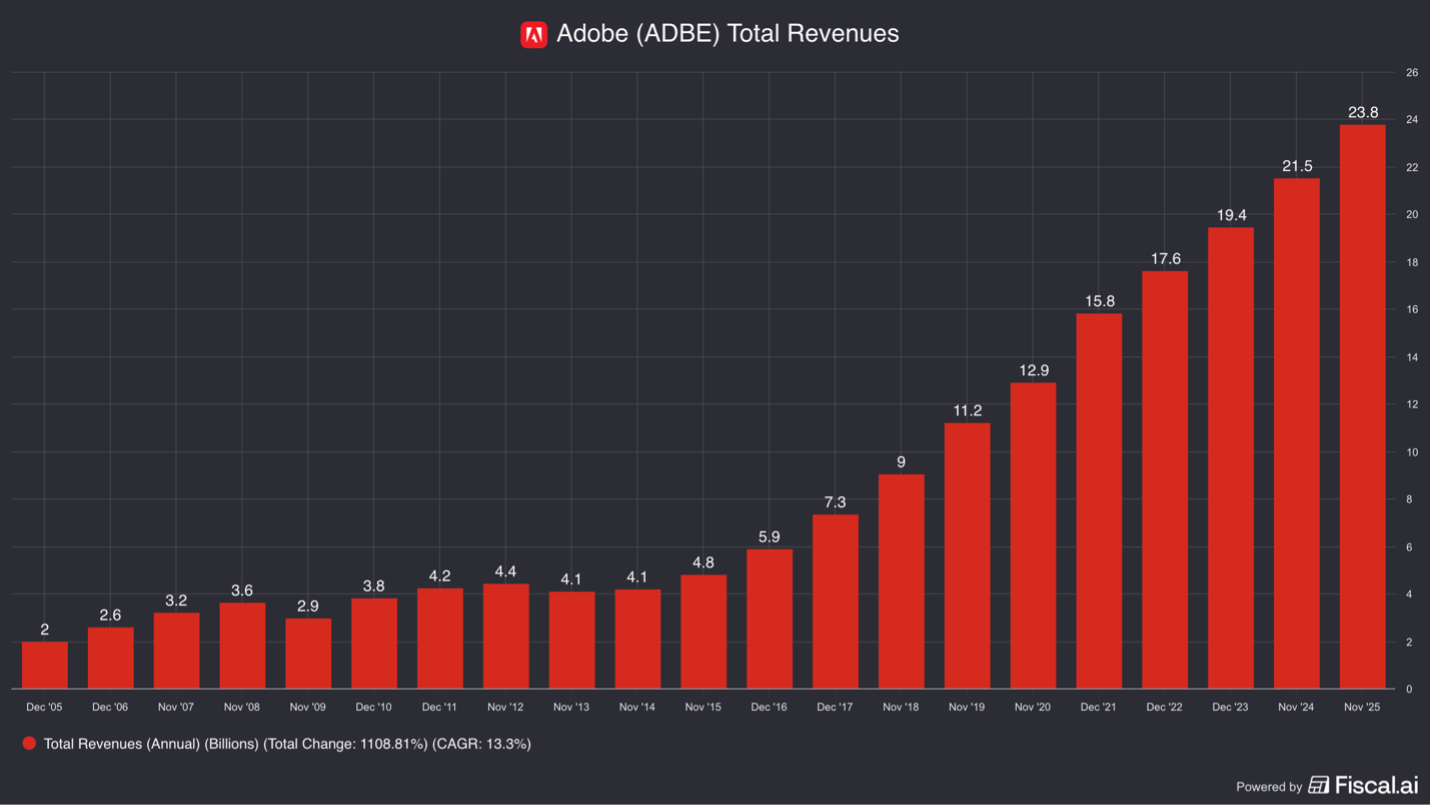

The shift from perpetual software licenses to subscription-based SaaS models initially pressured Adobe’s revenue growth, but it ultimately produced more predictable revenue, and Adobe became a better business as a result.

Now, I don’t necessarily see SaaS companies becoming better businesses on the other side of this transition, but I do think it’s a manageable shift.

Risk 2: The Possibility of In-House Software Development

Another concern is that AI could enable companies to build their own internal software applications, reducing the need for third-party software.

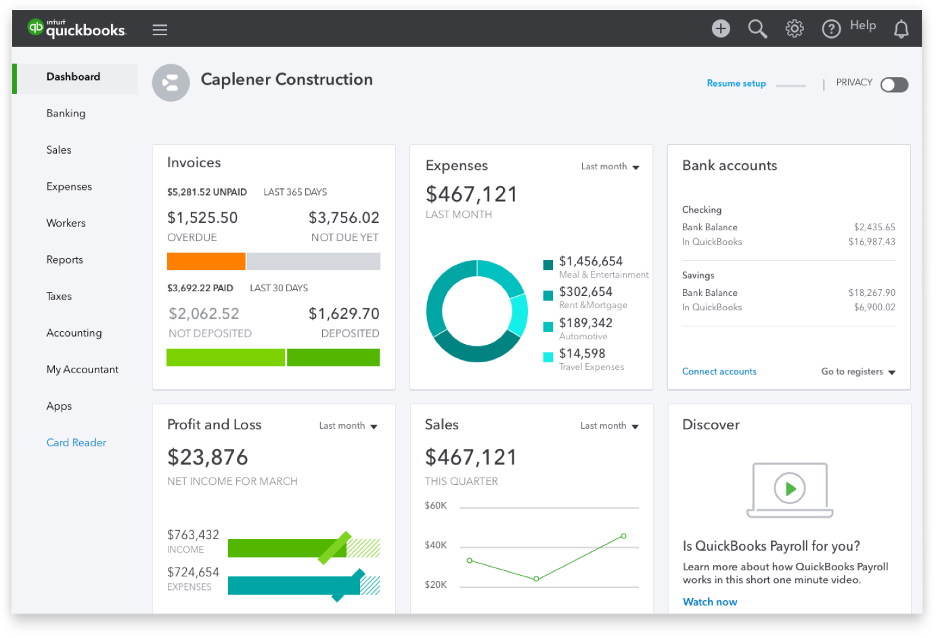

In theory, an enterprise could prompt an AI model to create an accounting system like Intuit’s QuickBooks or a CRM platform resembling Salesforce.

In practice, it is much harder than it sounds.

Software development involves more than generating code.

Applications must integrate with existing systems, maintain data integrity, comply with security standards, and receive ongoing updates.

Even minor errors can disrupt critical business processes.

Maintaining proprietary software would require companies to hire and retain engineering teams, effectively forcing every business to run its own software development department.

Historically, the opposite has been happening: organizations have increasingly outsourced infrastructure and software management to the cloud providers (Amazon, Microsoft, and Google).

For most businesses, software remains a relatively small expense compared with labor and operational costs, which reduces the incentive to take on building and maintaining proprietary in-house systems.

And if homegrown software contains any errors, it could actually prove much, much more costly than simply staying with an established software provider.

Maybe some SMBs and individuals will have a go at it, but at the enterprise level, it doesn’t seem likely that Nike is going to code its own CRM system, especially since much of the functionality it may have wanted, it has already added to its existing software with low-code and no-code tools.

Risk 3: Increased Competition from AI-Native Startups

AI coding tools like Claude Code significantly reduce the cost and time required to build new applications.

This could enable startups to develop competing software products more quickly than in the past.

However, software success rarely depends solely on writing code.

Distribution, brand reputation, customer support, and integration within existing workflows are often far more important.

Enterprise software providers are deeply embedded in organizational processes.

Replacing these systems can be expensive and disruptive, even if a lower-cost alternative exists.

More to the point, though, there ALREADY exist many cheaper software alternatives. For almost every software product imaginable, there are cheaper options.

TurboTax has competitors that offer FREE federal tax filing…

And TurboTax still has the largest market share.

Companies compete on far more than price and these AI-native software companies would have to figure out what they offer the customer beyond a lower price.

Customers don’t care how the software is made.

They care about the benefit to themselves, and being “AI-made” doesn’t mean anything to them…

It could actually be a negative, since AI-made software may be more prone to bugs, and these newer companies probably offer less support as well.

Moreover, established companies are not standing still.

Many incumbents are rapidly integrating AI capabilities into their existing products, often launching new AI features that rank among their fastest-growing offerings.

So while AI may accelerate product development, it does not address any of the other elements needed to build a business, like distribution and support.

R&D is just one line item for a business, and lowering it only minimally changes the competitive landscape.

Risk 4: The “Token Risk”

A more technical concern relates to how AI models are priced.

When software companies integrate AI capabilities, they often rely on external models provided by OpenAI, Anthropic, or Google’s Gemini.

Each interaction with these models incurs a cost measured in tokens, the units of text the models read and generate.

As software companies incorporate AI into their products, they pass the cost of the “tokens” used on to the end customer with each use, whether through usage fees or by embedding it in the subscription price.

If AI providers significantly increase token prices, software companies could face rising operating costs.

However, since there are many AI models to pick from right now, any individual one has limited pricing power.

So unless one model becomes far better than all the others, software companies can pit the AI model companies against each other to keep prices down.

Furthermore, the AI model companies themselves want the software companies’ distribution, because they want their models used so they can collect data and improve them.

Right now the AI model companies are focused on growth more than profit, so they are unlikely to jack up the price of tokens.

However, if that changes and investors in Anthropic and OpenAI start to demand to see more profits, they could raise the price of tokens.

But again, the existence of multiple models, including open-source ones, will limit their pricing power. Software companies can also route different requests to different models based on price, perhaps using an open-source model like DeepSeek for simpler requests.
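The routing idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any vendor’s actual system: the model names, the per-million-token prices, and the “complexity tier” each model is rated to handle are all hypothetical placeholders. The router simply sends each request to the cheapest model capable of handling it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    price_per_million_tokens: float  # hypothetical blended USD price
    max_complexity: int              # hypothetical tier: higher handles harder requests


# Hypothetical catalog; names and prices are placeholders, not real list prices.
CATALOG = [
    Model("open-source-small", 0.20, 1),
    Model("frontier-a", 3.00, 3),
    Model("frontier-b", 2.50, 3),
]


def route(complexity: int, catalog: list[Model] = CATALOG) -> Model:
    """Return the cheapest model whose capability tier covers the request."""
    capable = [m for m in catalog if m.max_complexity >= complexity]
    if not capable:
        raise ValueError(f"no model can handle complexity {complexity}")
    return min(capable, key=lambda m: m.price_per_million_tokens)


def request_cost(tokens: int, model: Model) -> float:
    """Cost in USD of a request that uses the given number of tokens."""
    return tokens / 1_000_000 * model.price_per_million_tokens


if __name__ == "__main__":
    # A simple request goes to the cheap open-source model,
    # a hard one to the cheaper of the two frontier models.
    print(route(1).name, route(3).name)  # open-source-small frontier-b

    # If frontier-b raises its price above its rival, traffic shifts automatically.
    hiked = [Model("frontier-b", 4.00, 3) if m.name == "frontier-b" else m for m in CATALOG]
    print(route(3, hiked).name)  # frontier-a
```

The design point is that switching providers is just a change to the catalog data, which is why a price hike by any single model company is easy for a software vendor to route around.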

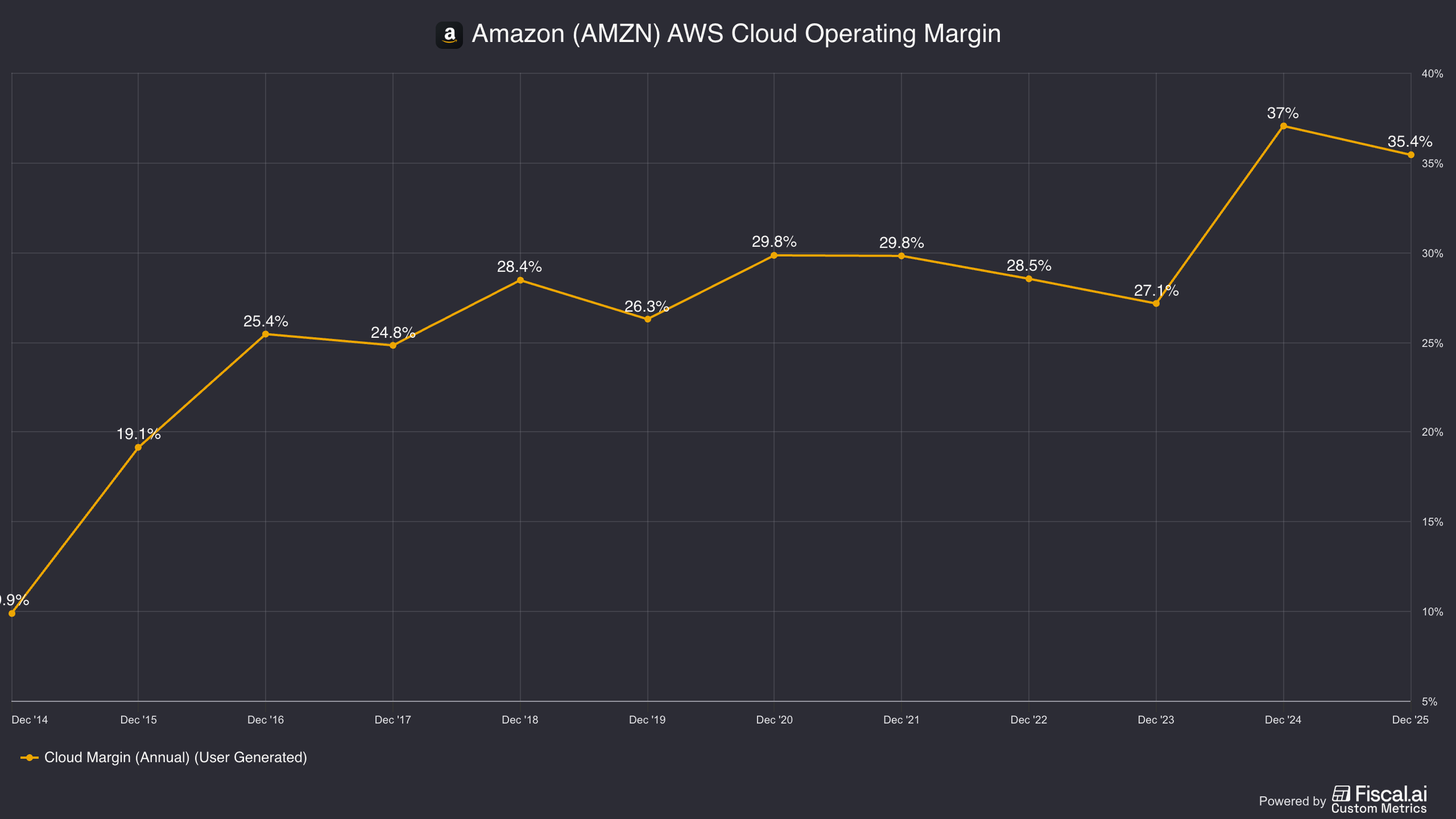

This doesn’t mean the AI model companies can’t earn good margins (just look at the dynamic among AWS, GCP, and Azure), but it will keep them from earning egregious profits.

In short, the more commoditized AI models become, the less pricing power they have…

And right now, it looks like models from Anthropic, OpenAI, and Google can be swapped for one another on many different tasks.

Risk 5: Competition Among Incumbent Software Platforms

In my opinion, the most immediate competitive risk will come not from startups or AI models, but from existing software companies expanding into each other’s markets.

AI dramatically lowers the cost of building new features and applications.

As a result, large platforms are increasingly attempting to broaden their product suites.

The players best positioned to take advantage of how easy it now is to build software are existing software companies.

They can roll out new applications and just cross-sell them to existing customers.

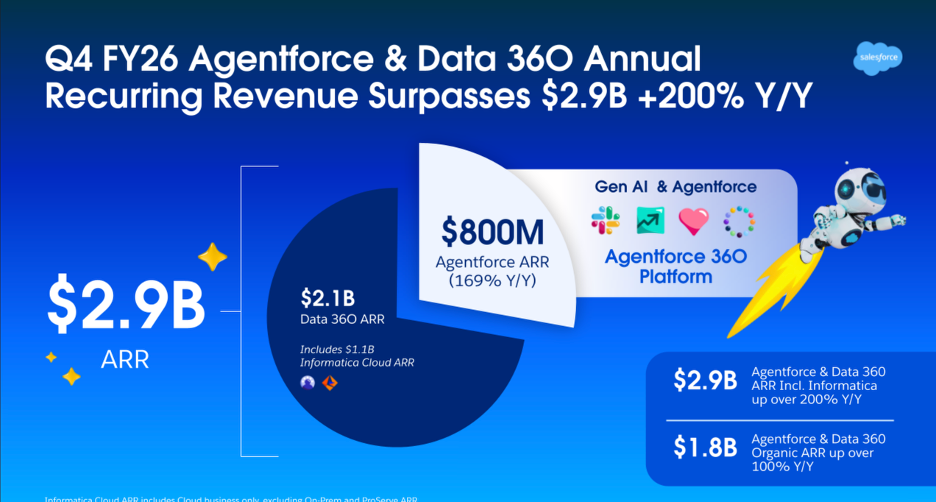

For example, ServiceNow, which historically focused on IT service management, is moving into customer relationship management (CRM), an area traditionally dominated by Salesforce.

Salesforce, in turn, is expanding into IT service management with Agentforce IT Service.

These companies are positioning themselves as centralized AI orchestration platforms that manage data access, security permissions, and workflow automation across the enterprise.

Basically every software company wants to become the platform layer that does all of the AI work. And to further entrench themselves in each customer, they are building new software products to expand the workflows they touch within a business.

Ultimately, companies like Salesforce or ServiceNow want to be the platform that employees interact with for basically all of their work, while equipping AI to help them get far more done than before.

Because these incumbents already possess customer relationships, distribution channels, and extensive data access, they are better positioned to expand into adjacent markets than a theoretical new entrant would be.

I think this means that a lot of “point solution” SaaS could be at risk as the big software companies roll out competing products that can be bundled with their existing software.

In my opinion, AI makes competition from existing software companies more formidable, not competition from new ones that have yet to be created.

Risk 6: The Existential Risk (Artificial General Intelligence)

The final risk is the most speculative but potentially the most consequential: the development of artificial general intelligence (AGI) or superintelligent AI.

This is the creation of an AI so good that it is smarter than a team of the smartest humans and makes zero mistakes.

In such a scenario, it is hard to argue that anyone would need software.

(Businesses probably won’t need people either… but that is another topic…)

Such a super AI could go straight to unstructured data, pull what it needs, create business logic on the fly, build user dashboards, and even anticipate work before a human asks for it.

It could basically bypass all traditional software application layers.

However, such an AI couldn’t be just nearly perfect; it would have to be actually perfect.

And if this all sounds a bit unrealistic, that is because we are nowhere near there today.

Yes, AI is super impressive, but we are talking about a scenario where it can do everything with zero mistakes.

If it makes any mistakes at all, businesses are far better off relying on deterministic software that doesn’t accidentally delete all of their data to save on storage costs or “guess” at how much to invoice a customer.

And until AI is so good that it can do everything without any oversight whatsoever, businesses are going to want guardrails, permissioning, and audit trails so they can control the AI and see what it has done.

Companies like Salesforce, ServiceNow, and Intuit are creating guardrail systems that keep the AI from going “rogue”.

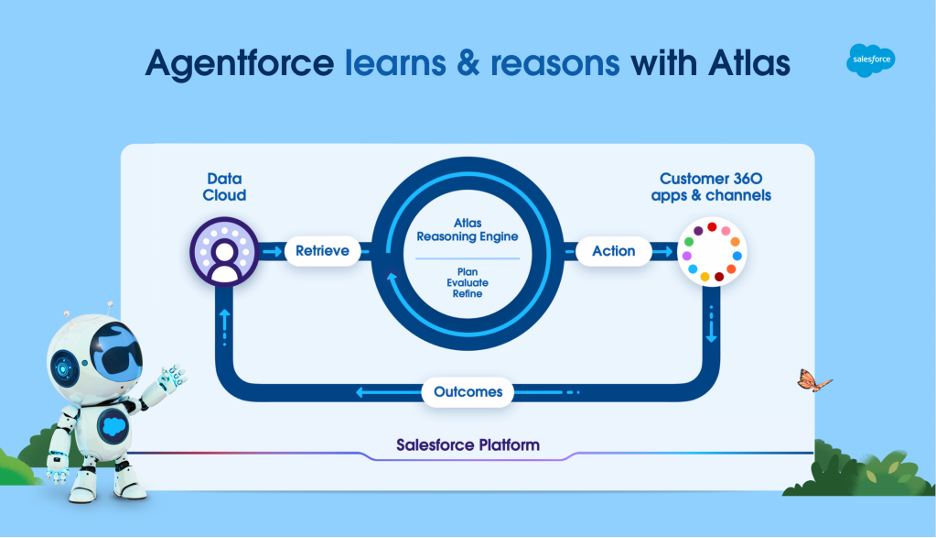

For example, Salesforce has its Atlas Reasoning Model.

It essentially plugs into LLMs from OpenAI, Anthropic, and Google, then interprets requests and creates business logic.

Business logic is a deterministic set of steps: the same inputs always produce the same outputs.

That is very different from how LLMs currently operate: they are probabilistic.

And until we get a trustworthy Super AI, businesses are going to want software to keep the AI contained.
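To illustrate the difference between deterministic business logic and a probabilistic model, here is a minimal Python sketch of a guardrail layer. It is a hypothetical design, not how Salesforce’s Atlas Reasoning Model or any other product actually works: an AI agent proposes an action, and deterministic code checks the agent’s permissions, recomputes the invoice from contract terms, and records every decision in an audit trail. The class names, the contract rate, and the one-cent tolerance are all assumptions made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class InvoiceProposal:
    customer_id: str
    units: int
    amount: float  # the amount an AI model suggests billing


@dataclass
class Guardrail:
    """Hypothetical deterministic layer sitting between an AI agent and the business."""
    contract_rates: dict[str, float]   # source of truth: customer -> price per unit
    allowed_actions: set[str]          # permissions granted to the AI agent
    audit_log: list[str] = field(default_factory=list)

    def expected_amount(self, customer_id: str, units: int) -> float:
        # Deterministic business logic: identical inputs always yield the same result.
        return self.contract_rates[customer_id] * units

    def review(self, action: str, proposal: InvoiceProposal) -> bool:
        """Approve the AI's proposal only if it is permitted and matches the rules."""
        if action not in self.allowed_actions:
            self.audit_log.append(f"BLOCKED {action}: not permitted")
            return False
        expected = self.expected_amount(proposal.customer_id, proposal.units)
        if abs(proposal.amount - expected) > 0.01:  # assumed one-cent tolerance
            self.audit_log.append(
                f"BLOCKED invoice for {proposal.customer_id}: "
                f"AI proposed {proposal.amount:.2f}, contract says {expected:.2f}"
            )
            return False
        self.audit_log.append(f"APPROVED invoice for {proposal.customer_id}: {expected:.2f}")
        return True


if __name__ == "__main__":
    guard = Guardrail(contract_rates={"acme": 12.50}, allowed_actions={"send_invoice"})
    print(guard.review("send_invoice", InvoiceProposal("acme", 100, 1250.00)))     # True
    print(guard.review("send_invoice", InvoiceProposal("acme", 100, 1300.00)))     # False: AI "guessed"
    print(guard.review("delete_all_data", InvoiceProposal("acme", 100, 1250.00)))  # False: no permission
    for entry in guard.audit_log:
        print(entry)
```

The sketch captures the division of labor described above: the model can be flexible about what to propose, but the deterministic layer decides what is actually allowed to happen and leaves a record a human can inspect.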

How Markets Price Existential Risk

Financial markets often struggle to value existential risks because they are inherently binary: either the outcome occurs, or it does not.

In my opinion, the current software sell-off is happening because this existential risk is hanging over the sector, and there is no real way to apply a risk premium to it.

How much do you raise your hurdle rate to account for a nuclear war leading to extinction?

If it seems like a silly example, it is because we don’t take it into account.

Sometimes a new risk pops up that can’t be priced (like Super Intelligence) and the markets struggle to figure out how to price it.

I also think that taking an opinion on an existential risk facing a business is often how an investor can find an interesting opportunity to set themselves apart from the crowd.

When Warren Buffett invested in The Washington Post during the early 1970s, the company faced legal and political threats related to the Pentagon Papers.

There was a real risk that The Washington Post’s staff would be criminally indicted.

President Nixon was also trying to get the FCC to challenge the company’s television licenses, which generated much of its profit at the time.

When Buffett made his Washington Post investment, the lesson wasn’t that there was no way it could fail.

Instead, there was a very specific and obvious way the investment could have gone poorly, but he simply didn’t believe that would happen.

He took an opinion on the existential risk hanging over the business like the sword of Damocles, believing it wouldn’t fall.

A similar dynamic existed in Buffett’s investment in American Express during the “Salad Oil Scandal.”

At the time, the company faced liabilities large enough to threaten its solvency.

Buffett knew there was a proposed settlement, though, and believed it would be accepted.

If he had been wrong about that, this investment could also have been disastrous.

In both cases there was a risk that threatened the very existence of the business, and Buffett took an opinion on it. He was right and made a lot of money on both investments.

The Super AI risk is very similar.

It could potentially kill software companies.

Or it could simply be a fear that is causing a market dislocation, and thus an opportunity for you as an investor to take an opinion and potentially reap a large reward...

But just because Buffett was right, doesn’t mean you will be.


Nothing in this newsletter is investment advice nor should be construed as such. Contributors to the newsletter may own securities discussed. Furthermore, accounts contributors advise on may also have positions in companies discussed. Please see our full disclaimers here.